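Setting Up an HBase Environment on Linux

1. HBase basic concepts:

Basic concepts and positioning of HBase

HBase is a distributed, column-oriented, open-source database. It is derived from the Google paper "Bigtable: A Distributed Storage System for Structured Data" by Fay Chang et al. Bigtable builds on the distributed storage provided by the Google File System (GFS). In the same way, HBase provides Bigtable-like capabilities on top of Hadoop's HDFS. HBase began as a subproject of Apache Hadoop and is now a top-level Apache project. Unlike a typical relational database, HBase is suited to storing unstructured and semi-structured data. It also uses a column-based rather than a row-based model.

HBase (Hadoop Database) is a highly reliable, high-performance, column-oriented, scalable distributed storage system. With HBase you can build large-scale structured storage clusters on inexpensive commodity PC servers.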

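What HBase is used for

- Massive data storage

- Near-real-time queries

HBase application scenarios

- Transportation

- Finance

- E-commerce

- Mobile (telephony data), etc.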

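HBase features

1. Large capacity

A single HBase table can hold more than ten billion rows and millions of columns. Capacity scales elastically in both dimensions of the data matrix: rows and columns.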

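2. Column-oriented

HBase storage and access control are organized by column (column family), and each column family can be retrieved independently. Data is physically stored grouped by column family rather than by row. As a result, a query that needs only a few fields reads far less data.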
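The toy Python model below makes the column-family point concrete. It is not HBase's storage code. Each column family lives in its own store, so a read that asks for one family never touches the others. The class and method names (`ToyTable`, `put`, `get`) are hypothetical.

```python
from collections import defaultdict


class ToyTable:
    """Toy model of column-family storage: one separate store per family."""

    def __init__(self, families):
        # family -> {rowkey -> {qualifier -> value}}
        self.stores = {family: defaultdict(dict) for family in families}

    def put(self, row, family, qualifier, value):
        self.stores[family][row][qualifier] = value

    def get(self, row, families):
        """Read only the requested families; the other stores are never touched."""
        result = {}
        for family in families:
            # .get() avoids creating an empty row entry in the defaultdict
            for qualifier, value in self.stores[family].get(row, {}).items():
                result[f"{family}:{qualifier}"] = value
        return result


table = ToyTable(["info", "stats"])
table.put("user1", "info", "name", "Alice")
table.put("user1", "stats", "clicks", "42")
print(table.get("user1", ["info"]))  # {'info:name': 'Alice'}
```

In real HBase each column family has its own store files on HDFS. That separation is why grouping rarely-read columns into a separate family can save I/O.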

3. Multiple versions

Each HBase cell can store multiple versions of its data, distinguished by timestamp.
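Here is a minimal Python sketch of a versioned cell. `VersionedCell` is a hypothetical name. The sketch assumes millisecond timestamps, as HBase uses by default. Reads return the highest timestamps first, regardless of write order. The toy drops surplus versions immediately, whereas HBase stops returning them and removes them physically during compactions.

```python
import time


class VersionedCell:
    """Toy cell that keeps up to max_versions values, newest timestamp first."""

    def __init__(self, max_versions=3):
        self.max_versions = max_versions
        self.versions = []  # list of (timestamp, value), sorted newest first

    def put(self, value, ts=None):
        # Default to the current time in milliseconds, like an HBase put without a timestamp.
        ts = ts if ts is not None else int(time.time() * 1000)
        self.versions.append((ts, value))
        self.versions.sort(key=lambda tv: tv[0], reverse=True)
        # Toy simplification: discard versions beyond the limit right away.
        del self.versions[self.max_versions:]

    def get(self, n=1):
        """Return the values of the n newest versions."""
        return [value for _, value in self.versions[:n]]


cell = VersionedCell(max_versions=2)
for ts, value in [(1, "v1"), (2, "v2"), (3, "v3")]:
    cell.put(value, ts=ts)
print(cell.get())   # ['v3']
print(cell.get(5))  # ['v3', 'v2']
```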

4. Sparsity

Empty columns take up no storage space, so tables can be designed to be very sparse.
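HBase has no NULL cells: a column that was never written simply does not exist for that row. The short Python sketch below illustrates this. The helper `to_sparse` and the sample row are hypothetical.

```python
def to_sparse(row):
    """Keep only non-empty columns, the way an HBase row stores only cells that exist."""
    return {column: value for column, value in row.items() if value is not None}


# A "wide" logical row in which most columns are empty.
logical_row = {"info:name": "Alice", "info:email": None, "info:phone": None, "stats:clicks": "42"}
stored = to_sparse(logical_row)
print(stored)  # {'info:name': 'Alice', 'stats:clicks': '42'}
print(len(logical_row), "logical columns,", len(stored), "stored cells")  # 4 logical columns, 2 stored cells
```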

5. Scalability

The underlying storage is HDFS, so capacity can grow by adding nodes to the cluster.

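6. High reliability

The WAL (write-ahead log) mechanism ensures that data being written is not lost if the cluster fails. The replication mechanism, which copies data to another cluster, ensures that data is neither lost nor corrupted when the cluster suffers a serious problem. In addition, HBase stores its data on HDFS, which keeps its own replicas.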
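The toy Python sketch below illustrates the WAL idea. It is not HBase's implementation, and `ToyRegion` is a hypothetical name. Every edit is appended to a log before the in-memory MemStore is updated. After a crash, replaying the log rebuilds the lost in-memory state. In HBase the WAL lives on HDFS and is replayed when a RegionServer fails. Here a plain Python list stands in for it.

```python
class ToyRegion:
    """Toy write path: append to the WAL first, then update the in-memory MemStore."""

    def __init__(self, wal=None):
        self.wal = wal if wal is not None else []  # stands in for the WAL file on HDFS
        self.memstore = {}
        for row, value in self.wal:  # recovery: replay every logged edit in order
            self.memstore[row] = value

    def put(self, row, value):
        self.wal.append((row, value))  # 1. durable log write
        self.memstore[row] = value     # 2. in-memory write


region = ToyRegion()
region.put("row1", "a")
region.put("row2", "b")
# Simulate a crash: the MemStore is lost, but the WAL survives.
recovered = ToyRegion(wal=region.wal)
print(recovered.memstore)  # {'row1': 'a', 'row2': 'b'}
```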

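7. High performance

Architectural choices such as the underlying LSM-tree data structure and the sorted ordering of rowkeys give HBase very high write throughput. Region splitting, the rowkey (primary key) index, and caching give it reasonable random-read performance even on massive data sets. Lookups by rowkey can complete in milliseconds.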
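Rows are kept sorted by rowkey, and each region holds one contiguous key range. Finding the region for a rowkey is therefore a binary search over the regions' start keys. The Python sketch below shows that idea and is not HBase client code: real clients look up region locations in the hbase:meta table and cache them. The split points are made up. HBase compares rowkeys as raw bytes, and plain string comparison stands in for that here.

```python
import bisect


def find_region(start_keys, rowkey):
    """Index of the region whose [start_key, next_start_key) range contains rowkey.

    start_keys must be sorted, and the first region starts at the empty key "".
    """
    return bisect.bisect_right(start_keys, rowkey) - 1


# Hypothetical split points for a table that has been split into three regions.
start_keys = ["", "g", "p"]
for key in ["apple", "grape", "zebra"]:
    print(key, "-> region", find_region(start_keys, key))
```

A region's start key is inclusive, so the rowkey "g" itself belongs to region 1. The same sorted order is what makes rowkey range scans efficient.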

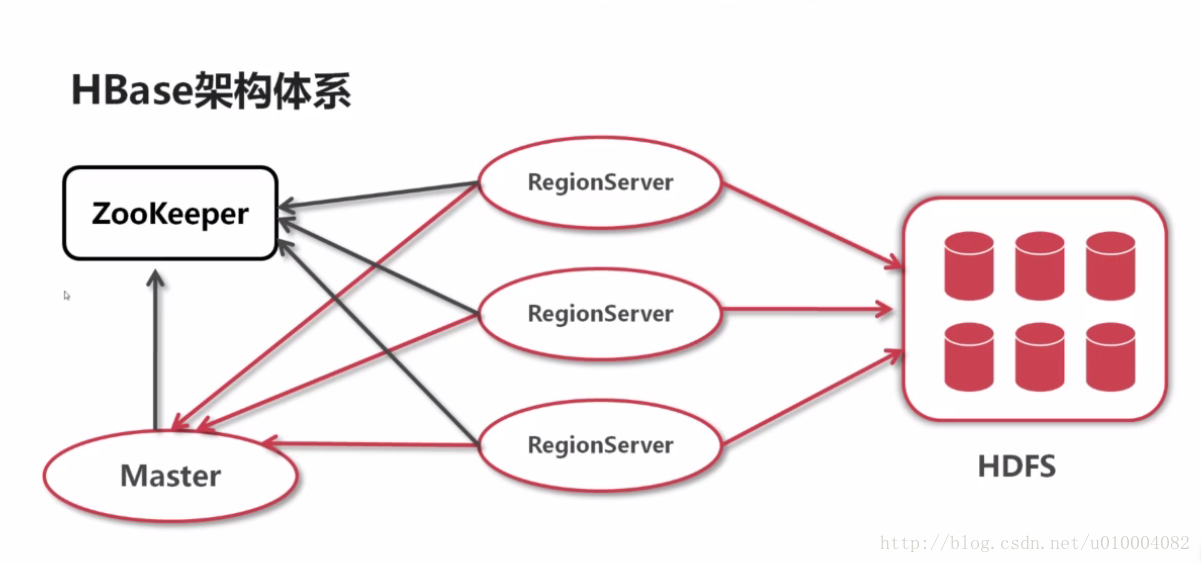

HBase architecture and design model

HBase architecture

2. Setting up the HBase environment

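【1】Download the HBase package hbase-1.4.3-bin.tar.gz from the official website【http://hbase.apache.org/】.

【2】Use Xftp5 to upload hbase-1.4.3-bin.tar.gz to the HBase directory on the server: /usr/local/hbase

【3】Log in to the server with Xshell5 and change to the directory: cd /usr/local/hbase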

Connecting to 192.168.3.4:22...

Connection established.

To escape to local shell, press 'Ctrl+Alt+]'.

Last login: Wed Apr 4 10:28:30 2018

[root@marklin ~]# cd /usr/local/hbase

【4】Extract the package with tar -xvf: tar -xvf hbase-1.4.3-bin.tar.gz

[root@marklin hbase]# tar -xvf hbase-1.4.3-bin.tar.gz

[root@marklin hbase]# ll

total 110240

drwxr-xr-x. 7 root root 160 Apr 4 10:53 hbase-1.4.3

-rw-r--r--. 1 root root 112883512 Apr 4 10:46 hbase-1.4.3-bin.tar.gz

[root@marklin hbase]#

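【5】Configure the HBase environment variables: run vim /etc/profile, add the lines below, then run source /etc/profile to apply them to the current shell. (The NODE_HOME lines come from an existing Node.js setup on this machine and are not needed by HBase.)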

#Setting NODE_HOME PATH

export NODE_HOME=/usr/local/nodejs/node-v9.10.1

export PATH=${PATH}:${NODE_HOME}/bin

export HBASE_HOME=/usr/local/hbase/hbase-1.4.3

export PATH=${PATH}:${HBASE_HOME}/bin

export HBASE_MANAGES_ZK=false

PS: HBase requires ZooKeeper and a Java environment. ZooKeeper, Java, and Hadoop are already installed on this machine, which also prepares for integrating Hadoop and HBase later.
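The profile appends ${HBASE_HOME}/bin to the end of PATH. As a result, an `hbase` executable in any earlier PATH directory takes precedence. This is consistent with the `hbase shell` session later in this walkthrough, which reports version 2.0.0-beta-2 rather than 1.4.3. The Python sketch below uses a hypothetical helper, `find_all_on_path`, to list every match in lookup order. The first match is the one the shell runs. `shutil.which` would return only that first one.

```python
import os


def find_all_on_path(name, path=None):
    """Return every executable called `name` on PATH, in lookup order (the first one wins)."""
    path = os.environ.get("PATH", "") if path is None else path
    matches = []
    for directory in path.split(os.pathsep):
        candidate = os.path.join(directory, name)
        if os.path.isfile(candidate) and os.access(candidate, os.X_OK):
            matches.append(candidate)
    return matches


if __name__ == "__main__":
    # More than one line of output means an earlier copy shadows the later ones.
    for match in find_all_on_path("hbase"):
        print(match)
```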

【6】Log in to the Linux server with Xshell5 and change to: cd /usr/local/hbase/hbase-1.4.3

[root@marklin ~]# cd /usr/local/hbase/hbase-1.4.3

[root@marklin hbase-1.4.3]# ll

total 776

drwxr-xr-x. 4 root root 4096 Mar 21 13:12 bin

-rw-r--r--. 1 root wheel 213809 Mar 21 13:12 CHANGES.txt

drwxr-xr-x. 2 root root 178 Mar 21 13:12 conf

drwxr-xr-x. 12 root root 4096 Mar 21 20:20 docs

drwxr-xr-x. 7 root root 80 Mar 21 20:12 hbase-webapps

-rw-r--r--. 1 root wheel 261 Mar 21 20:24 LEGAL

drwxr-xr-x. 3 root root 8192 Apr 4 10:53 lib

-rw-r--r--. 1 root wheel 143082 Mar 21 20:24 LICENSE.txt

-rw-r--r--. 1 root wheel 404470 Mar 21 20:24 NOTICE.txt

-rw-r--r--. 1 root wheel 1477 Mar 21 13:12 README.txt

[root@marklin hbase-1.4.3]#

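【7】Modify the HBase configuration files

Go to HBase's conf directory. Three files need to be modified: hbase-env.sh, hbase-site.xml, and regionservers, which lists the hosts that run a RegionServer, one per line. The changes to the first two are shown below:

(1) Modify hbase-site.xml: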

<configuration>

<property>

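<name>hbase.rootdir</name>

<!-- value omitted in this listing; hbase.rootdir is the directory HBase stores its data in, normally an HDFS URI of the form hdfs://host:port/path -->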

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.tmp.dir</name>

<value>/usr/local/hbase/repository/data/tmp</value>

</property>

<property>

<name>zookeeper.session.timeout</name>

<value>120000</value>

</property>

<property>

<name>hbase.master.maxclockskew</name>

<value>150000</value>

</property>

<property>

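<name>hbase.zookeeper.quorum</name>

<!-- value omitted in this listing; hbase.zookeeper.quorum takes a comma-separated list of ZooKeeper server hosts -->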

</property>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>hbase.master</name>

</property>

</configuration>
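In the listing above, hbase.rootdir, hbase.zookeeper.quorum and hbase.master are declared without a <value>. A quick check before starting HBase can catch properties like these. The Python sketch below is one way to do it. The helper names `read_site_xml` and `missing_values` are hypothetical, and the sample is a made-up excerpt in the same shape as the file.

```python
import xml.etree.ElementTree as ET


def read_site_xml(text):
    """Parse Hadoop/HBase-style *-site.xml text into {property name: value or None}."""
    root = ET.fromstring(text)
    props = {}
    for prop in root.findall("property"):
        name = (prop.findtext("name") or "").strip()
        value = prop.findtext("value")  # None when there is no <value> element at all
        props[name] = value.strip() if value is not None else None
    return props


def missing_values(props):
    """Names of properties declared without a non-empty <value>."""
    return [name for name, value in props.items() if not value]


# Hypothetical excerpt in the same shape as hbase-site.xml.
sample = """<configuration>
  <property><name>hbase.cluster.distributed</name><value>true</value></property>
  <property><name>hbase.rootdir</name></property>
</configuration>"""

props = read_site_xml(sample)
print(missing_values(props))  # ['hbase.rootdir']
```

To check the real file, pass its contents in, for example `read_site_xml(open("conf/hbase-site.xml").read())` from the HBase installation directory. XML comments inside the file are ignored by the parser.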

(2) Modify hbase-env.sh:

# export JAVA_HOME=/usr/java/jdk1.6.0/

export JAVA_HOME=/usr/local/java/jdk1.8.0_162

export HADOOP_HOME=/usr/local/hadoop/hadoop-2.7.5

export HBASE_HOME=/usr/local/hbase/hbase-1.4.3

# export HBASE_CLASSPATH=

export HBASE_CLASSPATH=/usr/local/hadoop/hadoop-2.7.5/etc/hadoop

export HBASE_PID_DIR=/usr/local/hbase/repository/pids

export HBASE_LOG_DIR=/usr/local/hbase/repository/logs

# export HBASE_MANAGES_ZK=true

export HBASE_MANAGES_ZK=false
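hbase-env.sh is sourced as a shell script. Commented-out lines therefore have no effect, and a later export overrides an earlier one. The simplified Python reader below shows which values actually apply. `effective_exports` is a hypothetical helper, and it does not expand ${VAR} references or handle quoting. The value to watch is HBASE_MANAGES_ZK=false: with it, HBase does not start its own ZooKeeper, so the external ensemble must already be running.

```python
import re

# Matches "export NAME=value"; commented-out lines ("# export ...") never match.
EXPORT_RE = re.compile(r"^\s*export\s+([A-Za-z_][A-Za-z0-9_]*)=(.*)$")


def effective_exports(script_text):
    """Map each exported variable to the value of its last assignment.

    Simplified on purpose: ${VAR} references are not expanded and quoting is not handled.
    """
    env = {}
    for line in script_text.splitlines():
        match = EXPORT_RE.match(line)
        if match:
            env[match.group(1)] = match.group(2).strip()
    return env


sample = """# export HBASE_MANAGES_ZK=true
export HBASE_MANAGES_ZK=false
# export HBASE_CLASSPATH=
export HBASE_CLASSPATH=/usr/local/hadoop/hadoop-2.7.5/etc/hadoop"""

env = effective_exports(sample)
print(env["HBASE_MANAGES_ZK"])  # false
if env.get("HBASE_MANAGES_ZK") == "false":
    print("HBase will not start ZooKeeper itself; an external ensemble must be running.")
```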

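【8】Start and test HBase:

HBase needs Hadoop (HDFS) and ZooKeeper, so start the services in this order: Hadoop HDFS → ZooKeeper → HBase.

[1] Format the HDFS NameNode by entering: hdfs namenode -format. Do this only when setting up HDFS for the first time, because formatting erases the existing HDFS metadata. Formatting does not start Hadoop. Afterwards, start HDFS (for example with start-dfs.sh) and make sure ZooKeeper is running before starting HBase.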

[root@marklin ~]# hdfs namenode -format

18/04/28 13:37:02 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = marklin.com/192.168.3.4

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.7.5

STARTUP_MSG: classpath = /usr/local/hadoop/hadoop-2.7.5/etc/hadoop:/usr/local/hadoop/hadoop-2.7.5/share/hadoop/common/lib/commons-compress-1.4.1.jar:...:/usr/local/hadoop/hadoop-2.7.5/contrib/capacity-scheduler/*.jar

STARTUP_MSG: build = https://shv@git-wip-us.apache.org/repos/asf/hadoop.git -r 18065c2b6806ed4aa6a3187d77cbe21bb3dba075; compiled by 'kshvachk' on 2017-12-16T01:06Z

STARTUP_MSG: java = 1.8.0_162

************************************************************/

18/04/28 13:37:02 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

18/04/28 13:37:02 INFO namenode.NameNode: createNameNode [-format]

18/04/28 13:37:03 WARN common.Util: Path /usr/local/hadoop/repository/hdfs/name should be specified as a URI in configuration files. Please update hdfs configuration.

18/04/28 13:37:03 WARN common.Util: Path /usr/local/hadoop/repository/hdfs/name should be specified as a URI in configuration files. Please update hdfs configuration.

Formatting using clusterid: CID-7c7c3820-9596-4e47-9c3a-486568b4f052

18/04/28 13:37:03 INFO namenode.FSNamesystem: No KeyProvider found.

18/04/28 13:37:03 INFO namenode.FSNamesystem: fsLock is fair: true

18/04/28 13:37:03 INFO namenode.FSNamesystem: Detailed lock hold time metrics enabled: false

18/04/28 13:37:03 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000

18/04/28 13:37:03 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true

18/04/28 13:37:03 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000

18/04/28 13:37:03 INFO blockmanagement.BlockManager: The block deletion will start around 2018 Apr 28 13:37:03

18/04/28 13:37:03 INFO util.GSet: Computing capacity for map BlocksMap

18/04/28 13:37:03 INFO util.GSet: VM type = 64-bit

18/04/28 13:37:03 INFO util.GSet: 2.0% max memory 889 MB = 17.8 MB

18/04/28 13:37:03 INFO util.GSet: capacity = 2^21 = 2097152 entries

18/04/28 13:37:03 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

18/04/28 13:37:03 INFO blockmanagement.BlockManager: defaultReplication = 2

18/04/28 13:37:03 INFO blockmanagement.BlockManager: maxReplication = 512

18/04/28 13:37:03 INFO blockmanagement.BlockManager: minReplication = 1

18/04/28 13:37:03 INFO blockmanagement.BlockManager: maxReplicationStreams = 2

18/04/28 13:37:03 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000

18/04/28 13:37:03 INFO blockmanagement.BlockManager: encryptDataTransfer = false

18/04/28 13:37:03 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000

18/04/28 13:37:03 INFO namenode.FSNamesystem: fsOwner = root (auth:SIMPLE)

18/04/28 13:37:03 INFO namenode.FSNamesystem: supergroup = supergroup

18/04/28 13:37:03 INFO namenode.FSNamesystem: isPermissionEnabled = false

18/04/28 13:37:03 INFO namenode.FSNamesystem: HA Enabled: false

18/04/28 13:37:03 INFO namenode.FSNamesystem: Append Enabled: true

18/04/28 13:37:04 INFO util.GSet: Computing capacity for map INodeMap

18/04/28 13:37:04 INFO util.GSet: VM type = 64-bit

18/04/28 13:37:04 INFO util.GSet: 1.0% max memory 889 MB = 8.9 MB

18/04/28 13:37:04 INFO util.GSet: capacity = 2^20 = 1048576 entries

18/04/28 13:37:04 INFO namenode.FSDirectory: ACLs enabled? false

18/04/28 13:37:04 INFO namenode.FSDirectory: XAttrs enabled? true

18/04/28 13:37:04 INFO namenode.FSDirectory: Maximum size of an xattr: 16384

18/04/28 13:37:04 INFO namenode.NameNode: Caching file names occuring more than 10 times

18/04/28 13:37:04 INFO util.GSet: Computing capacity for map cachedBlocks

18/04/28 13:37:04 INFO util.GSet: VM type = 64-bit

18/04/28 13:37:04 INFO util.GSet: 0.25% max memory 889 MB = 2.2 MB

18/04/28 13:37:04 INFO util.GSet: capacity = 2^18 = 262144 entries

18/04/28 13:37:04 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

18/04/28 13:37:04 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0

18/04/28 13:37:04 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000

18/04/28 13:37:04 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10

18/04/28 13:37:04 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10

18/04/28 13:37:04 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25

18/04/28 13:37:04 INFO namenode.FSNamesystem: Retry cache on namenode is enabled

18/04/28 13:37:04 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis

18/04/28 13:37:04 INFO util.GSet: Computing capacity for map NameNodeRetryCache

18/04/28 13:37:04 INFO util.GSet: VM type = 64-bit

18/04/28 13:37:04 INFO util.GSet: 0.029999999329447746% max memory 889 MB = 273.1 KB

18/04/28 13:37:04 INFO util.GSet: capacity = 2^15 = 32768 entries

18/04/28 13:37:04 INFO namenode.FSImage: Allocated new BlockPoolId: BP-1722456751-192.168.3.4-1524937024204

18/04/28 13:37:04 INFO common.Storage: Storage directory /usr/local/hadoop/repository/hdfs/name has been successfully formatted.

18/04/28 13:37:04 INFO namenode.FSImageFormatProtobuf: Saving image file /usr/local/hadoop/repository/hdfs/name/current/fsimage.ckpt_0000000000000000000 using no compression

18/04/28 13:37:04 INFO namenode.FSImageFormatProtobuf: Image file /usr/local/hadoop/repository/hdfs/name/current/fsimage.ckpt_0000000000000000000 of size 321 bytes saved in 0 seconds.

18/04/28 13:37:04 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0

18/04/28 13:37:04 INFO util.ExitUtil: Exiting with status 0

18/04/28 13:37:04 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at marklin.com/192.168.3.4

************************************************************/

[2] Start the HBase service by entering: start-hbase.sh

[root@marklin ~]# start-hbase.sh

running master, logging to /usr/local/hbase/repository/logs/hbase-root-master-marklin.com.out

marklin.com: running regionserver, logging to /usr/local/hbase/repository/logs/hbase-root-regionserver-marklin.com.out

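[3] Enter jps to list the running Java processes. HMaster and HRegionServer are the HBase daemons. NameNode, SecondaryNameNode and DataNode belong to HDFS, ResourceManager and NodeManager belong to YARN, and QuorumPeerMain is the ZooKeeper server process: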

[root@marklin ~]# jps

1122 QuorumPeerMain

1043 QuorumPeerMain

5413 SecondaryNameNode

6665 Jps

5580 ResourceManager

6268 HMaster

5085 NameNode

5709 NodeManager

6413 HRegionServer

5230 DataNode

1119 QuorumPeerMain
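Checking this list by eye is easy to get wrong after a restart. The Python sketch below parses `jps` output and reports which expected daemons are missing. `REQUIRED` is an assumption matching this single-host setup, where HDFS, ZooKeeper and HBase all run locally. On a cluster where ZooKeeper runs on other hosts, drop QuorumPeerMain from it. The helper names are hypothetical.

```python
# Daemons expected on this single host (assumption: HDFS, ZooKeeper and HBase all run here).
REQUIRED = {"NameNode", "DataNode", "QuorumPeerMain", "HMaster", "HRegionServer"}


def running_daemons(jps_output):
    """Parse `jps` output ("<pid> <MainClass>" per line) into {MainClass: [pids]}."""
    daemons = {}
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[0].isdigit():
            daemons.setdefault(parts[1], []).append(int(parts[0]))
    return daemons


def missing_daemons(jps_output, required=REQUIRED):
    """Return the required daemons that do not appear in the jps output, sorted by name."""
    return sorted(set(required) - set(running_daemons(jps_output)))


sample = """6268 HMaster
5085 NameNode
6665 Jps"""
print(missing_daemons(sample))  # ['DataNode', 'HRegionServer', 'QuorumPeerMain']
```

To check the live machine, feed it the real output, for example `subprocess.run(["jps"], capture_output=True, text=True).stdout`.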

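[4] Enter: hbase shell

Note: the version line in the output below reads 2.0.0-beta-2 rather than the 1.4.3 installed above. This suggests that another HBase installation came first on the PATH in this environment. Running hbase version shows which one is actually in use.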

[root@marklin ~]# hbase shell

HBase Shell

Use "help" to get list of supported commands.

Use "exit" to quit this interactive shell.

Version 2.0.0-beta-2, r9e9b347d667e1fc6165c9f8ae5ae7052147e8895, Fri Mar 2 13:29:06 PST 2018

Took 0.0044 seconds

hbase(main):001:0> status

1 active master, 0 backup masters, 1 servers, 0 dead, 2.0000 average load

Took 0.4714 seconds

hbase(main):002:0> exit
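The `status` summary is a one-line health check: at least one active master and zero dead servers. "Average load" is the average number of regions per RegionServer. The Python sketch below turns that line into numbers for scripted checks. The regex is written against the exact line printed in this transcript, so other HBase versions may word it differently. `parse_status` is a hypothetical helper.

```python
import re

STATUS_RE = re.compile(
    r"(?P<active>\d+) active master, (?P<backup>\d+) backup masters, "
    r"(?P<servers>\d+) servers, (?P<dead>\d+) dead, (?P<load>[\d.]+) average load"
)


def parse_status(line):
    """Turn the one-line summary printed by the shell's `status` command into a dict."""
    match = STATUS_RE.search(line)
    if match is None:
        raise ValueError(f"unrecognized status line: {line!r}")
    return {key: float(value) if key == "load" else int(value)
            for key, value in match.groupdict().items()}


summary = parse_status("1 active master, 0 backup masters, 1 servers, 0 dead, 2.0000 average load")
healthy = summary["active"] >= 1 and summary["dead"] == 0
print(summary, "healthy" if healthy else "check the cluster")
```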

[5] Open the HBase Master web UI in a browser: http://192.168.3.4:16010/master-status#compactStas (16010 is the default Master web UI port since HBase 1.0)
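For scripted checks, for example after a reboot, the Python sketch below tests whether the Master web UI answers. It uses only the standard library. `master_ui_up` is a hypothetical helper, and it assumes the default port 16010 and the /master-status page shown above. Any connection error, timeout or non-200 response counts as "down".

```python
import urllib.request


def master_ui_up(host, port=16010, timeout=3.0):
    """Return True if the HBase Master web UI answers /master-status with HTTP 200."""
    url = f"http://{host}:{port}/master-status"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # URLError, HTTPError and socket timeouts are all OSError subclasses.
        return False


# Example: master_ui_up("192.168.3.4")
```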
