Apache Hadoop YARN – NodeManager

The NodeManager (NM) is YARN’s per-node agent and takes care of the individual compute nodes in a Hadoop cluster. Its duties include keeping up to date with the ResourceManager (RM), managing containers’ life cycles, monitoring the resource usage (memory, CPU) of individual containers, tracking node health, managing logs, and running auxiliary services that different YARN applications may use.

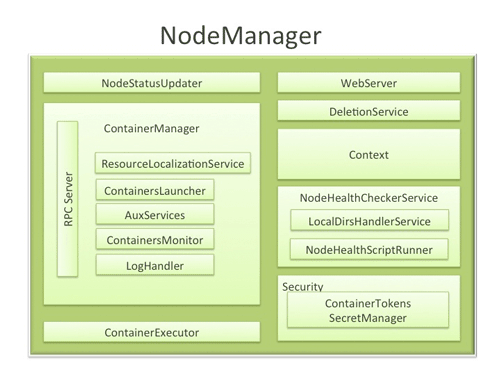

NodeManager Components

- NodeStatusUpdater

On startup, this component registers with the RM and sends information about the resources available on the node. Subsequent NM-RM communication provides updates on container statuses, such as new containers running on the node and completed containers.

In addition, the RM may signal the NodeStatusUpdater to kill containers that are already running.
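The register-then-heartbeat exchange described above can be sketched roughly as follows. This is a minimal illustration, not the real YARN protocol: `ResourceTracker`, `recordStatus` and `heartbeatOnce` are hypothetical names, and container statuses are reduced to plain strings.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch; these types are stand-ins, not the real YARN classes. */
public class NodeStatusUpdaterSketch {
    /** Assumed stand-in for the RM side of the NM-RM protocol. */
    public interface ResourceTracker {
        void register(String nodeId, int memoryMb, int vcores);

        /** Receives status changes; returns ids of containers the RM wants killed. */
        List<String> heartbeat(String nodeId, List<String> containerStatuses);
    }

    private final ResourceTracker rm;
    private final String nodeId;
    private final List<String> pendingStatuses = new ArrayList<>();

    public NodeStatusUpdaterSketch(ResourceTracker rm, String nodeId, int memoryMb, int vcores) {
        this.rm = rm;
        this.nodeId = nodeId;
        // One-time registration on startup, announcing the node's resources.
        rm.register(nodeId, memoryMb, vcores);
    }

    /** Queues a status change (for example a new or completed container). */
    public void recordStatus(String containerId, String state) {
        pendingStatuses.add(containerId + "=" + state);
    }

    /** Sends the changes queued since the last heartbeat; returns containers to kill. */
    public List<String> heartbeatOnce() {
        List<String> report = new ArrayList<>(pendingStatuses);
        pendingStatuses.clear();
        return rm.heartbeat(nodeId, report);
    }
}
```

In this sketch the kill signal simply rides back on the heartbeat response; a real implementation would call `heartbeatOnce` on a timer and hand the returned ids to whatever component stops containers.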

- ContainerManager

This is the core of the NodeManager. It is composed of the following sub-components, each of which performs a subset of the functionality that is needed to manage containers running on the node.

- RPC server: ContainerManager accepts requests from Application Masters (AMs) to start new containers, or to stop running ones. It works with ContainerTokenSecretManager (described below) to authorize all requests. All the operations performed on containers running on this node are written to an audit-log which can be post-processed by security tools.

- ResourceLocalizationService: Responsible for securely downloading and organizing various file resources needed by containers. It tries its best to distribute the files across all the available disks. It also enforces access control restrictions of the downloaded files and puts appropriate usage limits on them.

- ContainersLauncher: Maintains a pool of threads to prepare and launch containers as quickly as possible. Also cleans up the containers’ processes when such a request is sent by the RM or the ApplicationMasters (AMs).

- AuxServices: The NM provides a framework for extending its functionality by configuring auxiliary services. This allows per-node custom services that specific frameworks may require, and still sandbox them from the rest of the NM. These services have to be configured before NM starts. Auxiliary services are notified when an application’s first container starts on the node, and when the application is considered to be complete.

- ContainersMonitor: After a container is launched, this component starts observing its resource utilization while the container is running. To enforce isolation and fair sharing of resources like memory, each container is allocated some amount of such a resource by the RM. The ContainersMonitor monitors each container’s usage continuously and if a container exceeds its allocation, it signals the container to be killed. This is done to prevent any runaway container from adversely affecting other well-behaved containers running on the same node.

- LogHandler: A pluggable component with the option of either keeping the containers’ logs on the local disks or zipping them together and uploading them onto a file-system.
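The enforcement check performed by the ContainersMonitor can be illustrated with a small sketch. It assumes memory is the only tracked resource and that usage figures have already been sampled; the class and method names are hypothetical and do not mirror the NM’s actual code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Hypothetical sketch of one monitoring pass over the running containers. */
public class ContainersMonitorSketch {
    /**
     * Returns the ids of containers whose sampled memory usage (bytes)
     * exceeds the amount allocated to them by the RM.
     */
    public static List<String> findOverLimit(Map<String, Long> allocatedBytes,
                                             Map<String, Long> usedBytes) {
        List<String> toKill = new ArrayList<>();
        for (Map.Entry<String, Long> usage : usedBytes.entrySet()) {
            Long limit = allocatedBytes.get(usage.getKey());
            // Containers without a known allocation are skipped in this sketch.
            if (limit != null && usage.getValue() > limit) {
                toKill.add(usage.getKey());
            }
        }
        return toKill;
    }
}
```

A monitor thread would run such a pass repeatedly and signal a kill for every id returned, so that a runaway container cannot starve its neighbours.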

- ContainerExecutor

Interacts with the underlying operating system to securely place files and directories needed by containers and subsequently to launch and clean up processes corresponding to containers in a secure manner.

- NodeHealthCheckerService

Checks the health of the node by periodically running a configured script. It also monitors the health of the disks specifically, by creating temporary files on them at regular intervals. Any change in the health of the system is reported to the NodeStatusUpdater (described above), which in turn passes the information on to the RM.
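The disk check described above, creating a temporary file to see whether a disk is still usable, might look roughly like this. It is a sketch under assumptions: the class name, the probe file prefix and the idea of filtering a list of directories are illustrative and not taken from the NM source.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch of a disk health probe based on temporary files. */
public class DiskHealthSketch {
    /** A directory counts as healthy if a probe file can be created and deleted. */
    public static boolean isWritable(Path dir) {
        try {
            Path probe = Files.createTempFile(dir, "nm-health-", ".probe");
            Files.delete(probe);
            return true;
        } catch (IOException | SecurityException e) {
            // Missing directory, read-only file system, full disk, and so on.
            return false;
        }
    }

    /** Returns only the directories that passed the probe. */
    public static List<Path> healthyDirs(List<Path> dirs) {
        List<Path> healthy = new ArrayList<>();
        for (Path dir : dirs) {
            if (isWritable(dir)) {
                healthy.add(dir);
            }
        }
        return healthy;
    }
}
```

A health service could run `healthyDirs` over the configured local directories on a schedule and report a change whenever the result differs from the previous pass.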

- Security

- ApplicationACLsManager: The NM needs to gate user-facing APIs, such as the display of container logs on the web-UI, so that they are accessible only to authorized users. This component maintains the ACLs for each application and enforces them whenever such a request is received.

- ContainerTokenSecretManager: Verifies incoming requests to ensure that every operation is properly authorized by the RM.
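One common way to check that a request was authorized by a central party is a keyed signature over the request’s identifier. The sketch below assumes that the RM and NM share a secret key and that a token’s password is an HMAC of its identifier; the algorithm choice (HmacSHA256) and all names here are assumptions for illustration, not a description of YARN’s token format.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.MessageDigest;
import javax.crypto.Mac;
import javax.crypto.spec.SecretKeySpec;

/** Hypothetical sketch of shared-secret token verification. */
public class ContainerTokenSketch {
    /** Issuer side (assumed to be the RM): signs the identifier with the shared key. */
    public static byte[] sign(byte[] key, String identifier) {
        try {
            Mac mac = Mac.getInstance("HmacSHA256");
            mac.init(new SecretKeySpec(key, "HmacSHA256"));
            return mac.doFinal(identifier.getBytes(StandardCharsets.UTF_8));
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("HMAC unavailable", e);
        }
    }

    /** Verifier side (the NM): recomputes the signature and compares in constant time. */
    public static boolean verify(byte[] key, String identifier, byte[] password) {
        return MessageDigest.isEqual(sign(key, identifier), password);
    }
}
```

Because only a holder of the key can produce a matching password, a request whose identifier (container id, resources, user) was altered after signing fails verification.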

- WebServer

Exposes the list of applications, containers running on the node at a given point of time, node-health related information and the logs produced by the containers.

Spotlight on Key Functionality

- Container Launch

To facilitate container launch, the NM expects to receive detailed information about a container’s runtime as part of the container-specifications. This includes the container’s command line, environment variables, a list of (file) resources required by the container and any security tokens.

On receiving a container-launch request, the NM first verifies it (if security is enabled) to authorize the user, check that the resource assignment is correct, and so on. The NM then performs the following steps to launch the container.

- A local copy of all the specified resources is created (Distributed Cache).

- Isolated work directories are created for the container, and the local resources are made available in these directories.

- The launch environment and command line are used to start the actual container.
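The three launch steps can be sketched as below. This is a simplified illustration: it copies resources straight into the work directory instead of going through a shared local cache, and the class and method names are hypothetical.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;
import java.util.List;
import java.util.Map;

/** Hypothetical sketch of the container launch steps. */
public class ContainerLaunchSketch {
    /** Steps 1 and 2: create an isolated work directory and copy the resources into it. */
    public static Path prepareWorkDir(Path nmLocalDir, String containerId, List<Path> resources) {
        try {
            Path workDir = Files.createDirectories(nmLocalDir.resolve(containerId));
            for (Path resource : resources) {
                Files.copy(resource, workDir.resolve(resource.getFileName()),
                        StandardCopyOption.REPLACE_EXISTING);
            }
            return workDir;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Step 3: build (but do not start) the process from the command line and environment. */
    public static ProcessBuilder buildLaunch(Path workDir, List<String> command,
                                             Map<String, String> env) {
        ProcessBuilder builder = new ProcessBuilder(command);
        builder.directory(workDir.toFile());   // run inside the container's own directory
        builder.environment().putAll(env);     // add the requested environment variables
        builder.redirectErrorStream(true);     // merge stderr into stdout for logging
        return builder;
    }
}
```

Calling `start()` on the returned `ProcessBuilder` would launch the process; the sketch stops short of that so the preparation steps can be inspected on their own.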

- Log Aggregation

Handling user logs has been one of the big pain points for Hadoop installations in the past. Instead of truncating user logs and leaving them on individual nodes as the TaskTracker did, the NM addresses the log-management issue by providing the option to move these logs securely onto a file system (FS), e.g. HDFS, after the application completes.

The logs of all containers that belong to a single application and ran on this NM are aggregated and written out to a single (possibly compressed) log file at a configured location in the FS. Users have access to these logs via the YARN command-line tools, the web-UI, or directly from the FS.
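The idea of folding many per-container logs into one compressed file can be shown with a small in-memory sketch. The record layout used here (a count, then id, length and bytes per container, all gzip-compressed) is invented for illustration and is not the on-disk format YARN uses.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.zip.GZIPInputStream;
import java.util.zip.GZIPOutputStream;

/** Hypothetical sketch: many container logs in, one compressed blob out. */
public class LogAggregationSketch {
    /** Writes every container's log into a single gzip-compressed byte array. */
    public static byte[] aggregate(Map<String, byte[]> logsByContainer) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(new GZIPOutputStream(buffer))) {
            out.writeInt(logsByContainer.size());
            for (Map.Entry<String, byte[]> entry : logsByContainer.entrySet()) {
                out.writeUTF(entry.getKey());          // container id
                out.writeInt(entry.getValue().length); // log length in bytes
                out.write(entry.getValue());           // log content
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }

    /** Reads the aggregated blob back into per-container logs. */
    public static Map<String, byte[]> read(byte[] aggregated) {
        Map<String, byte[]> logs = new LinkedHashMap<>();
        try (DataInputStream in = new DataInputStream(
                new GZIPInputStream(new ByteArrayInputStream(aggregated)))) {
            int count = in.readInt();
            for (int i = 0; i < count; i++) {
                String containerId = in.readUTF();
                byte[] content = new byte[in.readInt()];
                in.readFully(content);
                logs.put(containerId, content);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return logs;
    }
}
```

Keeping the container id next to each log is what lets a reader later pull out the output of one container without unpacking separate files per container.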

- How MapReduce shuffle takes advantage of NM’s Auxiliary-services

The Shuffle functionality required to run a MapReduce (MR) application is implemented as an Auxiliary Service. This service starts up a Netty web server and knows how to handle MR-specific shuffle requests from Reduce tasks. The MR AM specifies the service id for the shuffle service, along with any security tokens that may be required. The NM provides the AM with the port on which the shuffle service is running, which is then passed on to the Reduce tasks.
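The lookup by service id can be pictured with the following sketch, in which the NM keeps a table of auxiliary services and answers a container-start request with metadata for the services the AM named. The class, its methods, and the encoding of the port as a 4-byte buffer are assumptions made for illustration.

```java
import java.nio.ByteBuffer;
import java.util.HashMap;
import java.util.Map;
import java.util.Set;

/** Hypothetical sketch of an auxiliary-service table kept by the NM. */
public class AuxServicesSketch {
    private final Map<String, Integer> portsByServiceId = new HashMap<>();

    /** Called while the NM starts, once per configured auxiliary service. */
    public void register(String serviceId, int port) {
        portsByServiceId.put(serviceId, port);
    }

    /** Returns, for each service id named by the AM, a buffer holding that service's port. */
    public Map<String, ByteBuffer> metaDataFor(Set<String> requestedServiceIds) {
        Map<String, ByteBuffer> metaData = new HashMap<>();
        for (String serviceId : requestedServiceIds) {
            Integer port = portsByServiceId.get(serviceId);
            if (port == null) {
                throw new IllegalArgumentException("Unknown auxiliary service: " + serviceId);
            }
            ByteBuffer buffer = ByteBuffer.allocate(Integer.BYTES);
            buffer.putInt(port);
            buffer.flip(); // make the buffer readable from the start
            metaData.put(serviceId, buffer);
        }
        return metaData;
    }
}
```

In such a design the AM would read the port out of the returned buffer and hand it to its Reduce tasks, which then know where to fetch map output on that node.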

Conclusion

In YARN, the NodeManager is primarily limited to managing abstract containers, i.e. only the processes corresponding to a container, and does not concern itself with per-application state management such as MapReduce tasks. It also does away with the notion of named slots like map and reduce slots. Because of this clear separation of responsibilities, coupled with the modular architecture described above, the NM can scale much more easily and its code is much more maintainable.
